I'm unsure whether artificial intelligence (AI) is a ticking time bomb or an incredible machine capable of outsmarting humans and rendering us redundant in many roles. However, I'm concerned about the recent flurry of opinions on the risks and dangers of evolving AI, particularly large language model chatbots such as ChatGPT and Bard. Just a few days ago, centibillionaire investor Warren Buffett compared the rise of AI to the creation of the atom bomb. Even Geoffrey Hinton, considered by many to be the Godfather of AI, issued a Mayday call, quitting his decade-long job at Google so he could speak his mind on the dangers of explosive AI growth. The Godfather-turned-doomer believes AI poses a greater threat to humanity than climate change.


Tech moguls have rallied around the potential risks of faster-than-anticipated AI evolution. Google CEO Sundar Pichai and Apple co-founder Steve Wozniak have warned users about the flip side of AI and the dangers that can stem from its misuse or manipulation. Maverick tech disruptor Elon Musk has even called for a six-month moratorium on the development of new AI systems, especially those more powerful than GPT-4.

These leaders are not alarmed without reason; the concerns about AI are rooted in real risks. AI has the potential to usher in a new dawn of symbiotic intelligence, with humans and machines working together in ways that lead to a renaissance of possibility and abundance. But it will also replace and displace humans. The World Economic Forum (WEF) estimates that AI will dislodge humans from 25 per cent of current jobs over the next five years. AI can be insidious, and its rise has only confirmed that it is a double-edged sword.

AI systems collect copious data on individuals, including their behaviour patterns and personal details, and this data can be vulnerable to cyber-attacks, leading to privacy breaches and identity theft. Moreover, AI systems can absorb unconscious biases from the people who build them or the data they are trained on, leading to discriminatory decisions. For example, facial recognition algorithms have been shown to have higher error rates for people with darker skin tones. Bad actors such as cybercriminals and hacktivists can also weaponize advanced AI systems to peddle misinformation or fan religious bigotry. That is far from improbable, as generative AI tools like ChatGPT have already shown their prowess in producing human-like content across formats: text, images and video.

Recently, I had the privilege of attending a workshop organized by the ICC National Women's Entrepreneurship Committee (INWEC). The workshop focused on empowering women entrepreneurs through the knowledge and applications of AI.

The event was graced by distinguished guests, including Tanaya Patnaik, Executive Director of the 'Sambad Group' and Convenor of INWEC. Tanaya invited me to deliver a concise overview of GPT models and their relevance to women entrepreneurs. In my presentation, I discussed how GPT models can be harnessed to boost productivity, streamline complex processes and foster innovation across diverse areas of business. I also underscored the support that tools like ChatGPT can offer women entrepreneurs on their journeys to success.

But there are fears too, and the question they raise is: should we pause further AI research and development? That strikes me as an absurd and unfeasible idea. Would you advocate banning the internet just because it spreads propaganda or misinformation? The AI genie is out of the bottle, and there can be no let-up or foot-dragging on AI development. We have embraced a free society whose future is predicated on innovation in blockbuster technologies like AI. AI is meant to complement human effort and make our lives easier, healthier and happier. There is no denying that AI has risks, but the rewards outweigh them.

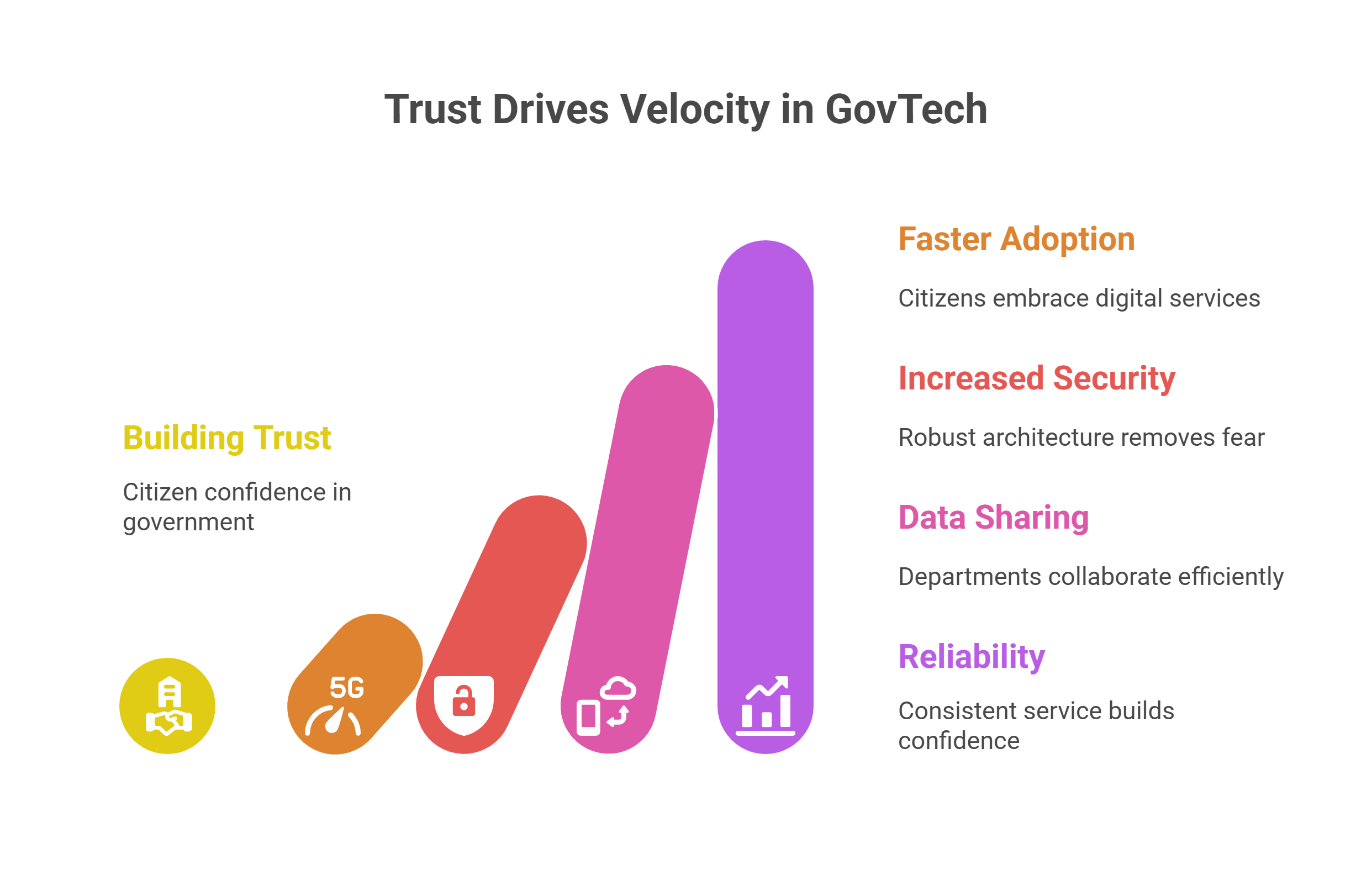

Having said that, we can't stay mute when the wolf is at the door. There is a call to action (CTA) for all stakeholders to contain the upsurge of unethical AI. The good actors must pool ideas and combine efforts to thwart the designs of bad actors in the AI landscape. Some action has already got off the ground. European Union lawmakers recently agreed on a set of proposals for generative AI development. In addition, US President Joe Biden and Vice President Kamala Harris held talks at the White House with several AI company leaders, including Sundar Pichai and Sam Altman, CEO of ChatGPT-maker OpenAI.

The upsurge of AI brings attendant risks with it, and it is vital that we navigate the risks surrounding us. Our future strategy on AI should centre on innovation tempered with mitigation. Investing in research and development can help ensure that AI is developed responsibly and ethically. Developing ethical frameworks for its use can help ensure that AI serves the betterment of society rather than causing harm. Regulation can check the untrammeled proliferation of use cases, and here governments can work in synergy with corporations to strengthen AI governance. Finally, collaboration among policymakers, researchers, businesses and civil society organizations is vital to navigating the risks associated with AI. The best recipe to maximize rewards and minimize risks is to use AI responsibly, ethically and securely.

This blog was originally published on Priyadarshi Nanu Pany's LinkedIn account.
